Introduction

Imagine you’re a librarian of a vast library of precious books. What keeps you up at night? How would you protect the books from threats? You might worry about fires, floods, or people trying to steal or destroy what’s inside. But what if the biggest risks weren’t outside the library at all—what if they lived inside it, in the everyday decisions and limits of the librarians themselves? How would you keep everyone safe, including the people doing the protecting?

These are the kinds of questions that guided our work with Throneless Tech on Bitpart, a secure communications platform for journalists, activists, and human rights defenders. This work was part of the UXD Lab, supported by the Open Technology Fund. Before Bitpart’s launch, we worked with Throneless Tech to design and stress-test the platform’s prototype. By combining Disaster Games with Personas Non Grata, two methods used to surface failure modes, threats, and risks, we learned that you can’t secure a “library” if you don’t account for the stress, burnout, and humanity of the people caring for the books and holding the keys.

The Problems We Aimed To Solve

There’s no shortage of threat modeling frameworks. STRIDE, attack trees, and data flow diagrams offer structured ways to surface technical vulnerabilities and answer questions like, what can an attacker do to our assets, and where might the system be weak? Those tools are valuable when you have a relatively stable product, team, and architecture, and you mainly need to harden endpoints, restrict access, or perhaps patch dependencies.

Bitpart was not that kind of project. It was an early-stage prototype for high-risk users, built by a small developer co-op balancing multiple commitments, in environments where “security” has to include legal, social, and organizational realities—not just encrypted databases and hardened APIs. So we needed to understand threats that don’t show up neatly in predefined categories: burnout, coercion, overwork, and single points of human failure.

That meant offering space for participation, not prescription. A prescriptive use of a traditional framework might have produced a solid list of vulnerabilities to fix, but not a shared understanding of why they mattered in this context or how new ones might emerge over time. Instead, with the team, we were aiming to build threat literacy specific to Bitpart: a shared capacity across users, administrators, and maintainers to think proactively about risk.

Existing frameworks acknowledge concepts such as insider threats and social engineering, but they usually frame humans as attack vectors or points of failure. We focused instead on what could go wrong with the people themselves and how that would cascade through everything else. That required empathizing with the exhausted, stressed, multitasking people operating and sustaining the platform, and treating their cognitive limits and working conditions as core security concerns rather than edge cases.

Our Approach

Making space

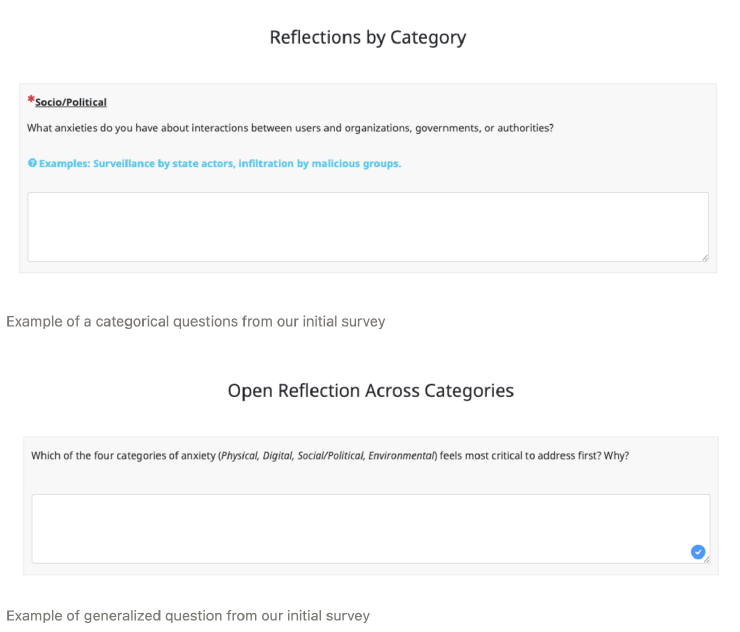

To begin, we anonymously collected team members’ anxieties using a short survey before the Disaster Game workshop.

We wanted to give every team member the space to name the disasters they saw as possible and the anxieties they held about Bitpart. During the workshop, we prioritized the responses compiled from the survey.

Priming the team

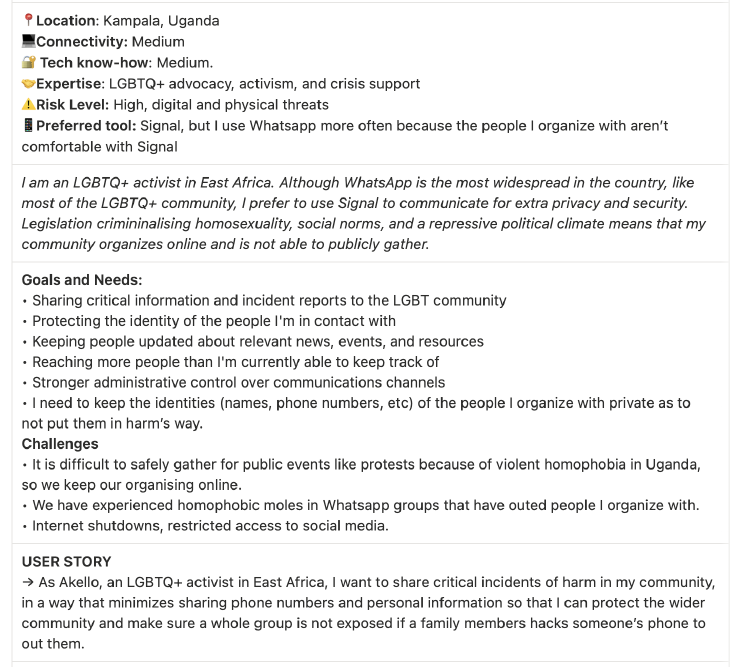

We opened the workshop not with threats or the risks gathered from our short survey, but with a review of the user personas from our earlier research. These were not demographic composites; they were personas of end users and admins, with concrete scenarios about how they would actually use Bitpart under pressure. Starting this way centered human experience and primed the team’s imagination. I believe this was an important step for a product prototype: rather than talking about Bitpart users in the abstract or hastily crafting scenarios on the spot, we grounded the exercise in specific people, contexts, and constraints. That way, whenever we later asked, “What could go wrong?”, the question was anchored in something shared and concrete.

Meet Akello: Online Organizer

Playing With Disasters

With those personas in mind, we moved into the Disaster Game with the Throneless Tech team. We deliberately steered the focus away from the “easy” disasters, such as server crashes or widespread data breaches, and toward clear stress cases: catastrophes like arrests, device seizures, admin abductions, and funding cuts. As the team played, these “likely” catastrophes surfaced threats and vulnerabilities across end users, admins, and maintainers, which together form the platform’s whole human stack.

After the Workshop

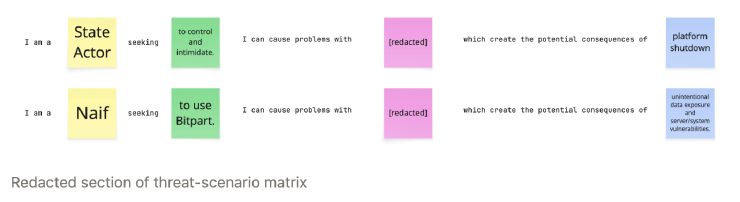

At the close of the workshop, we shifted to an “actor-based” threat-modeling approach. After composing and sorting the most likely disasters for Bitpart, we helped the team decompose one of them to identify the potential threat actors within that inflection point or catastrophe. After the workshop, I repeated this process for the remaining disasters and grouped the results into a threat-scenario matrix that detailed the actors, their goals, the system areas potentially affected, and the potential consequences.
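For readers who think in data structures, a threat-scenario matrix of this kind can be sketched as a list of records, one per actor and disaster. The sketch below is only an illustration: the class name, field names, helper function, and every row value are hypothetical stand-ins, not the contents of the actual Bitpart matrix.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ThreatScenario:
    """One row of a threat-scenario matrix (hypothetical schema)."""
    disaster: str
    actor: str
    goal: str
    affected_areas: Tuple[str, ...]
    consequence: str


# Illustrative rows only; these are assumptions, not findings from the project.
MATRIX = [
    ThreatScenario(
        disaster="device seizure",
        actor="hostile authority",
        goal="identify subscribers",
        affected_areas=("end-user device", "message history"),
        consequence="subscriber identities exposed",
    ),
    ThreatScenario(
        disaster="legal pressure on an admin",
        actor="coerced admin",
        goal="comply to protect themselves",
        affected_areas=("admin console", "message history"),
        consequence="conversation records handed over",
    ),
    ThreatScenario(
        disaster="funding cut",
        actor="solo maintainer",
        goal="keep the project running alone",
        affected_areas=("dependency updates", "documentation"),
        consequence="known vulnerabilities stay unpatched",
    ),
]


def scenarios_by_area(matrix):
    """Index scenarios by each system area they could affect."""
    index = defaultdict(list)
    for row in matrix:
        for area in row.affected_areas:
            index[area].append(row)
    return dict(index)


if __name__ == "__main__":
    for area, rows in scenarios_by_area(MATRIX).items():
        actors = ", ".join(row.actor for row in rows)
        print(f"{area}: {actors}")
```

Indexing by affected area is one way to see which parts of a system attract the most actors; grouping by actor or by disaster would work the same way.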

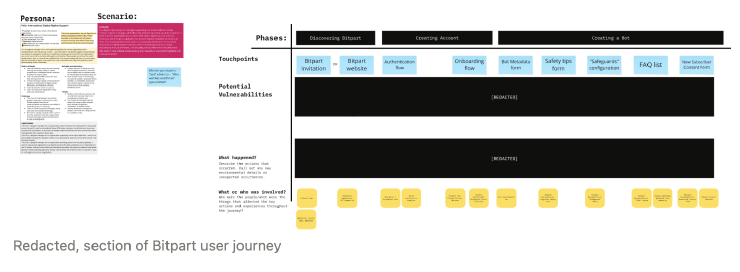

I used this matrix as input for a more process-based approach, aimed at understanding the threats within specific conversational flows and the larger arcs of user journeys. Using the Personas Non Grata, I stepped through both the flows and the journeys to see exactly where and how a threat actor could trigger a disaster at a specific interaction (i.e., a site of vulnerability).
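The walkthrough itself was a manual, qualitative exercise, but its logic can be loosely sketched in code: pair every step in a flow with every persona and note where what the step exposes overlaps with what the persona acts on. Everything named below (the step names, the persona names, the `exposes` and `acts_on` fields) is an assumption made up for illustration, not Bitpart's actual flows or personas.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowStep:
    """A single interaction in a conversational flow (hypothetical)."""
    name: str
    exposes: frozenset  # what this step reveals or depends on


@dataclass(frozen=True)
class PersonaNonGrata:
    """An actor whose goals or limits can turn a step into a disaster."""
    name: str
    acts_on: frozenset  # what this persona targets or is short of


def sites_of_vulnerability(flow, personas):
    """Return (step, persona, overlap) for every step a persona can affect."""
    findings = []
    for step in flow:
        for persona in personas:
            overlap = step.exposes & persona.acts_on
            if overlap:
                findings.append((step.name, persona.name, sorted(overlap)))
    return findings


# Illustrative flow and personas; all values are assumptions.
FLOW = [
    FlowStep("subscribe", frozenset({"phone number"})),
    FlowStep("broadcast message", frozenset({"admin attention", "recipient list"})),
    FlowStep("hand off to a human", frozenset({"admin attention"})),
]
PERSONAS = [
    PersonaNonGrata("exhausted admin", frozenset({"admin attention"})),
    PersonaNonGrata("device seizer", frozenset({"phone number", "recipient list"})),
]

if __name__ == "__main__":
    for step, persona, overlap in sites_of_vulnerability(FLOW, PERSONAS):
        print(f"{step}: {persona} via {', '.join(overlap)}")
```

A set intersection is a crude proxy for the judgment involved in a real walkthrough. Its value here is only in showing the shape of the question asked at each step.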

What We Discovered

Safety and Care Throughout the Whole Human Stack

The workshop reframed where safety and care “live” in Bitpart. As the group played through these catastrophes, something important emerged: the threats they identified didn’t cluster around a single type of user. Vulnerabilities showed up across three distinct but interdependent layers: end users, whose devices and identities could be exposed; Bitpart administrators, whose judgment and capacity shaped how safely Bitpart was operated; and maintainers, whose ability to sustain the project determined whether any of that protection would exist over time.

A failure in any one of these layers could cascade into the others. For example, an overworked admin making a rushed decision could expose subscribers, or a burned-out maintainer could leave critical vulnerabilities unpatched.

What became clearer was that a “harmful librarian” is almost always a system failure, not a personal failure. People might make unsafe choices when the systems they use lean too heavily on their memory and attention. And when they are not given adequate support or guidance, they are likely to sacrifice their own well-being under pressure.

Overall, Disaster Games worked not because it produced a better checklist, but because it emotionally simulated what panic, stress, and overwhelm might feel or look like in practice. We weren’t just thinking through risks; we were feeling what it would mean to be the “librarian” while everything went wrong. That simulation surfaced vulnerabilities that no audit alone would have revealed.

Personas Made Threats More Designable

We surfaced a lot of emotional content during the workshop: fear of legal retaliation, worry about making mistakes under pressure, and anxiety about being the only person who knows how a specific system works. The patterns in those fears told us where the team was already aware of Bitpart’s fragility: places where responsibilities were vague or where safeguards relied too heavily on individual heroics. When we turned those anxieties into Personas Non Grata, we weren’t inventing new threats. We were giving shape to the risks the team already felt but hadn’t yet named.

Once those anxieties were crystallized into concrete personas (the coerced admin, the overextended moderator, the solo maintainer), they became design inputs. With the team, we could ask targeted questions: What happens in this flow if the admin is exhausted? What if they are under legal pressure? What guardrails or prompts in the UI would help? This was an important shift because it turned abstract anxieties into specific design opportunities, which let the team make design decisions more effectively. Examples could include clearer defaults, better documentation, safer handoff points, and ways to reduce the cognitive load on people operating Bitpart under stress.

Sustainability is a Risk

One of the most important discoveries came after the workshop: project sustainability itself emerged as a security problem, not just an operational one. Through the game and the subsequent mappings, it became clear how much of Bitpart’s safety depended on a few people continuing to have the time, energy, and funding to maintain it. The lack of contributors was not just a potential governance problem; it was an existential threat to any user who might rely on Bitpart, since an unmaintained security tool quickly becomes a dangerous one.

Here, the divergent exploration of “everything that could go wrong” in the Disaster Game helped prevent tunnel vision and provided a diversity of ideas. By opening up to a wide range of socio-technical catastrophes (and only later converging on the most likely and impactful ones), we weren’t fixating on a single, familiar category of risk (e.g., external attackers) and missing slow-moving threats like maintainer exhaustion or funding gaps. This insight led directly to another workshop focused on mapping the Contributor Persona and Journey with conversations about documentation and governance as forms of safety and care work.

The Outcome

The most important outcome wasn’t a checklist of issues; it was shared threat literacy across everyone working on Bitpart. Throneless Tech now had a common vocabulary for talking about risk at the end-user, administrator, and maintainer layers, with a clearer sense of how failures in one layer could cascade into the others.

Combining Disaster Games and Personas Non Grata turned ambient worry and rumination into actionable design questions. Instead of the vague sense “someone is at risk,” the team could now ask, what happens in this flow if the administrator is overwhelmed? What guardrails or defaults could better support them in this moment? This led to concrete changes: clearer defaults in flows, safer handoff points between the platform and users, and a renewed focus on documentation as a way to reduce cognitive load.

Finally, the team recognized that their nascent platform’s security depended heavily on a few people continuing to maintain and fundraise for the project. That realization led directly to the team treating governance and contributor onboarding as forms of safety and care work for the communities Bitpart aims to serve.

Credits

With thanks to Open Technology Fund for supporting this work.