My recent post describing some of the reasons we chose Slack over IRC for our public forum is part of a larger conversation people are having around the promise and concerns of group-communication tools. A quick search for “Slack vs. IRC” yields a wealth of opinions on the subject; our post generated some interesting discussion (and a couple of angry rants on Twitter).

I focused my discussion on the usability advantages of Slack – advantages that I believe encourage designers to join our public forum in a way that they would not if it were hosted on IRC. Simply Secure is about bridging the gap between the technical and design/research communities to get more human-centered thinkers working on open-source privacy-preserving tools. We can’t do that if we continue to tell designers that they have to communicate using tools they hate, and the OSS community’s expectation that they do so is one reason open-source tools are still so painful to use.

Buried at the end of the post was another point that deserves more attention: “But for the meantime, this abstract threat does not outweigh the benefits Slack offers, especially when one ponders how often both Slack and its open-source alternatives realistically undergo regular security reviews by skilled engineers.”

It’s critical to observe that we can’t assume that open-source tools are always – by virtue of being open source alone – the most secure in practice.

Open source alone is not enough

“What?!”, you might be yelling at your screen. After all, we all know that opening source code to the light of day allows the public to hold developers accountable, and prevent both unintentional bugs and all-too-intentional back doors.

But, you have to ask yourself: how many security audits have you personally performed of the open-source tools you use? (What were the results? Did you do a follow-up a year later?) How many IRC clients have bug bounties? How many of the open-source tools we depend on have anyone with security expertise reviewing their code – much less neutral third parties who aren’t part of the team that wrote it?

The answers, of course, are not pretty. Even projects new and old with an explicit security focus suffer serious bugs that would arguably have been caught by a thorough security review. We’re still exploring the world of Slack alternatives, but a recent review listed “empty test suite” as a problem with three of the five products it considered. If a team doesn’t have the resources to build automated tests into their development cycle, what confidence can we have that they are doing their due diligence with respect to security?

Dealing with resourcing realities

Big closed-source organizations like Slack clearly have the leg up in this domain; a quick search on LinkedIn reveals at least a handful of Slack engineers whose primary focus is security. This is slowly changing in the open-source world; efforts like the Core Infrastructure Initiative and OTF’s Red Team Lab (currently accepting applications; contact [email protected] with questions) provide support to open-source projects seeking to evaluate and improve their security posture.

It’s not enough to shake our collective, outraged fist and say that open-source projects would fulfill their maximally-secure destiny if only they had more resources. And I agree, of course, that there are considerations beyond code-level vulnerabilities that should give any user pause when considering a tool like Slack. Security is not a binary property, and a cloud-based solution hosted by a third party is too risky in the context of many organizations’ threat models.

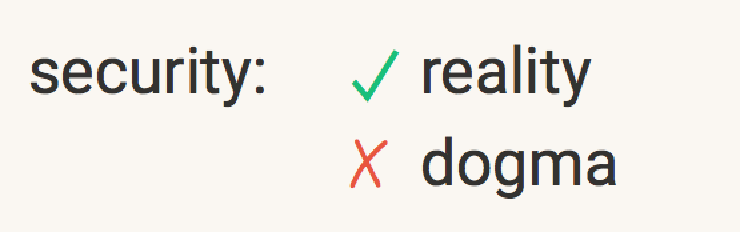

Open-source is good; avoiding dogma is better

So if you’re an organization that has the technical resources to host your own solution, and you find one that is truly accessible to your users (or your users have the time and patience to work with the developers to improve it), that’s great! If you do use an open-source tool, please contribute back to the project so its developers can continue in their good work. This is the ideal outcome, and the one that will lead us to the best privacy and security posture over time.

But, in the meantime – and no matter your threat model – please take an honest look at the pros and cons of any solution you consider, and think critically about whether a development team practices the values that they preach. Open-source solutions are great, but only if they will really meet your needs, or can be adapted in a reasonable amount of time to do so. Just because something is open-source doesn’t mean that it necessarily has fewer security vulnerabilities than a closed-source solution. Espousing otherwise – especially to organizations with limited technical capacity – is irresponsible.

Don’t let security dogma get in the way of your assessment of security reality.

Thanks to @isa for a recent conversation on the topic that inspired this post, although please don’t blame her for my conclusions.